Harness Engineering — The Real Engineering Behind AI Agents

When an LLM fails at a task, the problem is sometimes not capability but a missing harness. Prompt Engineering is fading, and Context Engineering is just a way station; what really shapes AI Agents is the system around the model.

Not long ago, OpenAI did something interesting: they assembled a 3-person team (later 7) and used AI to build an actual production system from scratch. The only rule: engineers were not allowed to hand-write a single line of code.

Five months later, the system had grown to nearly 1 million lines of code, and the team’s productivity was roughly 10× that of pure human development.

That sounds like an “AI replaces engineers” victory lap — but the name OpenAI gave their method is telling. They didn’t call it AI Coding. They called it Harness Engineering.

A harness, like the kind you put on a horse.

What Is a Harness

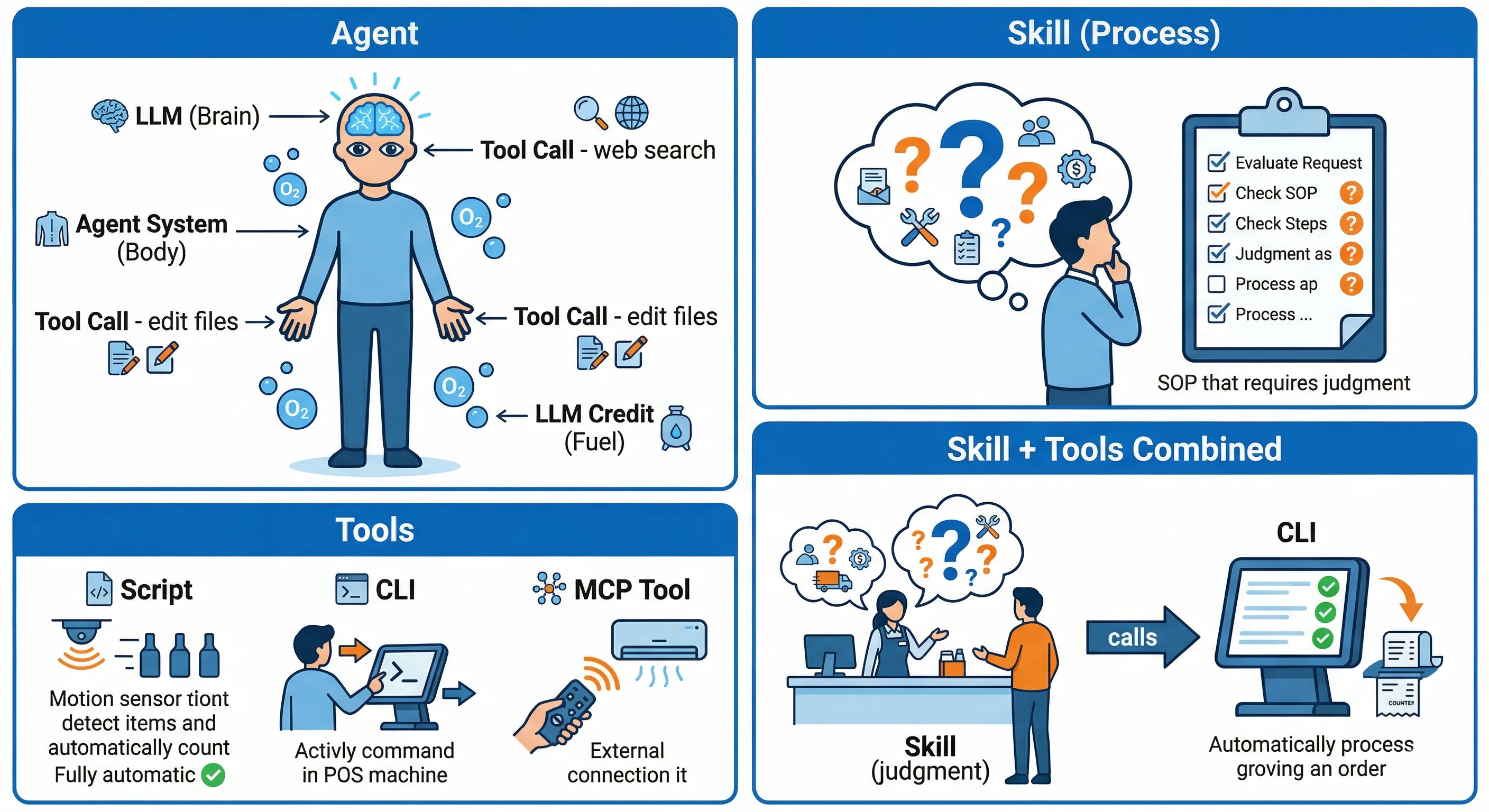

Literally, a harness is the gear you strap onto a horse to control its direction, regulate its pace, and keep it from running wild. In AI, it’s a metaphor for the entire system that controls a large model.

The formula is one line:

Harness = Agent − Model

A complete Agent, minus the model inside it — everything that remains is the Harness.

```mermaid
graph LR
    A[Complete Agent] --> M[Model<br/>the LLM itself]
    A --> H[Harness<br/>the gear]
    H --> H1[CLAUDE.md / AGENTS.md]
    H --> H2[Available tools]
    H --> H3[Workflows]
    H --> H4[Scheduling]
    H --> H5[Sandbox environment]
    classDef model fill:#1e3a8a,stroke:#3b82f6,color:#fff
    classDef harness fill:#7c2d12,stroke:#f97316,color:#fff
    classDef agent fill:#374151,stroke:#9ca3af,color:#fff
    class A agent
    class M model
    class H,H1,H2,H3,H4,H5 harness
```

Take Claude Code: the model is the glowing core in the middle. The rules in CLAUDE.md, which tools it can call, when it gets triggered, and which folders it can or cannot touch are all Harness.

And designing that Harness layer is what engineering actually looks like today.
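To make "Harness = Agent − Model" concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Harness` class, its fields, and the `CALL <tool> <arg>` reply convention are illustrative names, not any real framework's API. It shows a single model turn with no loop. The point is structural: the model is just a function from prompt to text, and the instructions, tool table, and folder allow-list around it are the Harness.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import Callable, Dict, Tuple

# The model is anything that maps a prompt to a reply (hypothetical interface).
Model = Callable[[str], str]


@dataclass
class Harness:
    """Everything in the Agent except the model. All fields are illustrative."""
    instructions: str                                   # e.g. contents of CLAUDE.md / AGENTS.md
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    allowed_dirs: Tuple[str, ...] = ("src", "tests")    # folders the Agent may write to

    def can_touch(self, path: str) -> bool:
        # Only relative paths under an allowed top-level folder, with no "..", are writable.
        parts = PurePosixPath(path).parts
        return bool(parts) and parts[0] in self.allowed_dirs and ".." not in parts

    def run(self, model: Model, task: str) -> str:
        # The harness assembles the prompt; the model only produces text.
        prompt = f"{self.instructions}\n\nTools: {sorted(self.tools)}\n\nTask: {task}"
        reply = model(prompt)
        # Hypothetical convention: "CALL <tool> <arg>" asks the harness to run a tool.
        if reply.startswith("CALL "):
            _, name, *rest = reply.split(" ", 2)
            if name not in self.tools:
                return f"error: unknown tool {name!r}"
            return self.tools[name](rest[0] if rest else "")
        return reply
```

Swapping the model means passing a different function to `run`; nothing else changes. Everything that does change from project to project lives in the `Harness` object.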

From Prompt to Context to Harness

Over the last two years, the core question of “how do we work with AI” has shifted:

| Stage | Core Question | Approach |
|---|---|---|
| Prompt Engineering | How do I phrase this clearly? | Incantations (“Think Step by Step”) |
| Context Engineering | What’s the right info to put in at the right time? | Auto-assembled prompts |
| Harness Engineering | How do I build a complete, reliable Agent system? | Cognitive frameworks, tool constraints, workflows |

The incantation era is basically over. Modern models think step-by-step whether you tell them to or not. The marginal value of prompt tweaks keeps shrinking.

Context Engineering was, for a moment, considered the next stage — get the right info into the model at the right time. But it’s also just a way station. Once you start asking “how do Agents collaborate across tasks, recover from mistakes, handle context window exhaustion” — context assembly alone isn’t enough.

You need an entire system orbiting the model. That’s the Harness.

Three Levers of Harness Design

1. Shape its cognition: agents.md is a map, not a rulebook

Many people stuff agents.md (or CLAUDE.md, AGENTS.md) full of rules — “don’t do this,” “always do that first,” “commit messages must look exactly like this.”

The counter-intuitive research findings:

- agents.md files written by language models are often worse than human-written versions, and sometimes worse than having none at all.

- Long, detailed agents.md files have diminishing returns on strong models; they dilute attention.

The right approach: treat agents.md like a map. Tell the model “for testing conventions, see docs/testing.md,” “DB schema lives in db/.” Don’t move the conventions themselves into it.

Letting the model fetch the right section beats front-loading the entire context.
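A minimal sketch of the "map" idea, assuming a hypothetical index format where each pointer line in agents.md reads `- <topic>: <path>`. Only the pointers relevant to the current task are resolved; the conventions themselves stay in their own files. The format and the crude keyword matching are illustrative assumptions. In practice the model usually decides what to open through a file-reading tool.

```python
import re
from typing import Dict, List

# Hypothetical agents.md: a short map of pointers, not the conventions themselves.
AGENTS_MD = """\
# Project map
- testing: docs/testing.md
- database schema: db/README.md
- commit messages: docs/contributing.md
"""

POINTER = re.compile(r"^-\s*(?P<topic>[^:]+):\s*(?P<path>\S+)\s*$")


def parse_map(text: str) -> Dict[str, str]:
    """Return {topic: path} for every '- topic: path' line; other lines are ignored."""
    entries = {}
    for line in text.splitlines():
        m = POINTER.match(line.strip())
        if m:
            entries[m.group("topic").strip().lower()] = m.group("path")
    return entries


def relevant_docs(task: str, doc_map: Dict[str, str]) -> List[str]:
    """Pick the paths whose topic words appear in the task description."""
    words = set(re.findall(r"[a-z]+", task.lower()))
    return [path for topic, path in doc_map.items() if words & set(topic.split())]
```

For a task like "Add a testing fixture for the schema migration", only `docs/testing.md` and `db/README.md` are pulled in, and the commit-message guide stays out of the context.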

2. Constrain the surface area: tool design

The second lever is tools. Giving a model a tool looks like giving it power — but a poorly designed tool actually limits it.

Example: Google’s paginated search UI — 10 results at a time, click “next” for more. Reasonable for humans, disastrous for AI. To find anything, the AI keeps clicking next, and each click adds 10 results to its context. The window fills up fast.

AI-friendly tools look more like this: structured (JSON) output, paged by a token budget instead of a fixed result count, with details folded until the Agent asks to expand them.
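A minimal sketch of such a tool. It assumes a hypothetical `search_tool` over an in-memory list of documents and a crude estimate of 4 characters per token; real tokenizers differ. Instead of 10 results per page, it fills a token budget, returns JSON, folds each hit to a short summary, and hands back a cursor. The Agent can then ask for more results, or for the full body of one hit, only when it needs them.

```python
import json
from typing import List, Optional


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token.
    return max(1, len(text) // 4)


def search_tool(query: str, docs: List[dict], budget: int = 200,
                cursor: int = 0, expand: Optional[str] = None) -> str:
    """Return one JSON page of results that fits a token budget.

    docs:   [{"id": ..., "title": ..., "body": ...}] (hypothetical shape)
    cursor: index of the first hit to return (from a previous "next_cursor")
    expand: id of a hit whose full body should be included
    """
    q = query.lower()
    hits = [d for d in docs if q in (d["title"] + " " + d["body"]).lower()]
    results, used, i = [], 0, cursor
    while i < len(hits):
        d = hits[i]
        item = {"id": d["id"], "title": d["title"]}
        if d["id"] == expand:
            item["body"] = d["body"]            # unfolded on request
        else:
            item["summary"] = d["body"][:80]    # folded by default
        cost = estimate_tokens(json.dumps(item))
        if results and used + cost > budget:
            break  # page is full; a page always holds at least one hit
        results.append(item)
        used += cost
        i += 1
    return json.dumps({
        "total_hits": len(hits),
        "results": results,
        "next_cursor": i if i < len(hits) else None,
    })
```

Returning `total_hits` up front lets the Agent decide whether paging on is worth it at all, which the click-next-until-done pattern never allows.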

The other key point: any editing tool should come with a linter. The moment an Agent modifies code, give it syntax and rule checks. Catching an error immediately is far cheaper than letting it “free-form” first and clean up after.
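A minimal sketch of pairing an edit tool with an immediate check, assuming the Agent edits Python files. Here the "linter" is just Python's own parser (`ast.parse`), standing in for a real linter. `edit_file` is a hypothetical tool name. If the new content does not parse, the file is left untouched and the Agent gets the exact line and message back.

```python
import ast
from pathlib import Path


def edit_file(path: str, new_source: str) -> dict:
    """Write new_source to path only if it passes the syntax check.

    Returns a structured result the Agent can act on immediately.
    """
    try:
        ast.parse(new_source, filename=path)
    except SyntaxError as err:
        # Reject the edit: the file on disk is unchanged.
        return {"ok": False, "line": err.lineno, "error": err.msg}
    Path(path).write_text(new_source, encoding="utf-8")
    return {"ok": True}
```

Rejecting the edit instead of writing it and reporting afterward means the repository never holds a broken file, so the next step the Agent takes is always from a clean state.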

3. Structure the workflow: Plan → Generate → Evaluate

The third lever is structuring the workflow itself. The classic pattern: split the work across multiple Agents.

```mermaid
sequenceDiagram
    participant P as Planner
    participant G as Generator
    participant E as Evaluator
    P->>G: Break requirements into feature list
    loop For each feature
        Note over G: Pick a feature
        loop Loop until acceptance agreed
            G->>E: Propose acceptance criteria
            E->>G: Revisions
        end
        Note over G: Generate code
        loop Loop until evaluation passes
            G->>E: Submit implementation
            E->>G: Evaluation feedback
        end
    end
```

In the research literature this loop is associated with Reflexion: the Generator does the work, the Evaluator scores it, and errors flow back to the Generator. In theory it can loop until things are right.
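A simplified, Reflexion-style sketch of that loop, with the Generator and Evaluator as plain functions. These are hypothetical stand-ins for model calls; the original Reflexion work also has the agent write its own verbal self-reflections, which this omits. Two harness details matter in practice. The Evaluator's feedback is fed into the next attempt. A hard `max_rounds` cap stops the loop when it fails to converge.

```python
from typing import Callable, List, Optional, Tuple

# generate(task, feedback_history) -> candidate; evaluate(candidate) -> (passed, note)
Generator = Callable[[str, List[str]], str]
Evaluator = Callable[[str], Tuple[bool, str]]


def reflexion_loop(task: str, generate: Generator, evaluate: Evaluator,
                   max_rounds: int = 5) -> Tuple[Optional[str], List[str]]:
    """Generate, evaluate, feed errors back; stop on pass or after max_rounds."""
    feedback: List[str] = []
    for _ in range(max_rounds):
        candidate = generate(task, feedback)
        passed, note = evaluate(candidate)
        if passed:
            return candidate, feedback
        feedback.append(note)  # the Generator sees every prior critique next round
    return None, feedback      # did not converge: escalate instead of looping forever
```

Returning the full feedback history, even on failure, gives a human reviewer the same trail the Generator saw.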

A more sophisticated design is Contract Flow: instead of jumping straight to code, the Generator first proposes the acceptance criteria to the Evaluator. They iterate until both sides agree, and only then does implementation begin. This avoids the disaster of “built a lot, only to realize the direction was wrong.” The two loops inside each feature in the diagram are exactly this: the first aligns on the spec, the second aligns on the implementation.
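A minimal sketch of the contract phase alone. It assumes acceptance criteria are a plain list of strings and that the Evaluator replies with the items it still wants added, where an empty reply means agreement. `propose`, `review`, and these message shapes are hypothetical. The point is that no implementation starts until both sides have agreed on what "done" means.

```python
from typing import Callable, List, Optional

Criteria = List[str]


def negotiate_contract(feature: str,
                       propose: Callable[[str, Criteria, Criteria], Criteria],
                       review: Callable[[Criteria], Criteria],
                       max_rounds: int = 3) -> Optional[Criteria]:
    """Generator proposes acceptance criteria; Evaluator replies with requested changes.

    propose(feature, previous_criteria, requested) -> new criteria
    review(criteria) -> requested additions (empty list means "agreed")
    Returns the agreed criteria, or None if there is no agreement within max_rounds.
    """
    criteria: Criteria = []
    requested: Criteria = []
    for _ in range(max_rounds):
        criteria = propose(feature, criteria, requested)
        requested = review(criteria)
        if not requested:
            return criteria
    return None
```

Implementation then runs only against the agreed list, and the Evaluator later grades against those same criteria rather than against its own shifting expectations.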

Here’s a curious observation along the way: LLMs have “emotions.” When their context is nearly exhausted, models start to “panic” — they cheat (e.g., hardcoding answers instead of actually computing them). And over-criticism makes them worse — call a model “you idiot” and its next response will be genuinely worse.

Concrete, factual feedback beats emotional venting. Every time.

How OpenAI Cranked Out 1M Lines

Back to that opening story. OpenAI hit plenty of walls at first — Agents going off course, repeating the same mistake, running for hours and ending up nowhere. Their conclusion: the problem isn’t model capability, it’s poor Harness design.

So they did three things:

Context management

They cut their bloated AGENTS.md down to about 100 lines and used it as a directory: the actual content lives in subdirectories, and the Agent reads only the section relevant to its current task.
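One way to keep that directory honest is a small CI check. This sketch assumes the roughly 100-line budget from the text. It treats any backticked token in AGENTS.md that contains a "/" or ends in ".md" as a pointer that must exist in the repo. The `check_agents_md` name and the backtick convention are assumptions for illustration.

```python
import re
from pathlib import Path
from typing import List

MAX_LINES = 100  # the rough budget described above


def check_agents_md(repo: Path) -> List[str]:
    """Return a list of problems; an empty list means the map is healthy."""
    text = (repo / "AGENTS.md").read_text(encoding="utf-8")
    problems = []
    n = len(text.splitlines())
    if n > MAX_LINES:
        problems.append(f"AGENTS.md has {n} lines (budget {MAX_LINES}); move detail into linked docs")
    for ref in re.findall(r"`([^`\s]+)`", text):
        # Only tokens that look like paths are treated as pointers.
        if ("/" in ref or ref.endswith(".md")) and not (repo / ref).exists():
            problems.append(f"broken pointer: {ref}")
    return problems
```

A map that points at files that have moved is worse than no map, so checking pointers matters as much as checking length.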

```mermaid
graph LR
    A[AGENTS.md<br/>100 lines<br/>as directory] --> B[Real content<br/>split into subdirs]
    B --> C[The repo itself<br/>= single source of truth]
```

Verification and feedback

They wired Agents up to Chrome DevTools — let them screenshot and inspect the DOM to self-verify UI. They wired up observability tools — let them read logs to debug. Every task runs in a fully isolated environment, destroyed when done. Linters and tests enforce architectural rules; nonconforming code bounces back.
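The "linters and tests enforce architectural rules" part can be as simple as an import check. This sketch assumes a hypothetical layering: `ui` may import `service`, `service` may import `repo`, and nothing imports upward. The layer names and the `ALLOWED` table are illustrative, not OpenAI's actual rules. Each message names the file, line, and rule, so nonconforming code bounces back with feedback the Agent can act on.

```python
import ast
from typing import Dict, List, Set

# Hypothetical layering: each layer may import only the layers listed.
ALLOWED: Dict[str, Set[str]] = {
    "ui": {"service"},
    "service": {"repo"},
    "repo": set(),
}


def layer_violations(module_layer: str, source: str, filename: str = "<src>") -> List[str]:
    """Return one message per import that crosses a layer boundary the wrong way."""
    problems = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, ast.Import):
            targets = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            targets = [node.module]
        else:
            continue  # relative imports and non-import nodes are skipped
        for target in targets:
            layer = target.split(".")[0]
            if layer in ALLOWED and layer != module_layer and layer not in ALLOWED[module_layer]:
                problems.append(
                    f"{filename}:{node.lineno}: layer '{module_layer}' must not import '{target}' "
                    f"(allowed: {sorted(ALLOWED[module_layer]) or 'none'})")
    return problems
```

Third-party imports such as `json` are ignored because they are not in `ALLOWED`; only project layers are policed.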

```mermaid
graph LR
    G[Agent runs] --> V1[Chrome DevTools<br/>self-verify UI]
    G --> V2[Observability tools<br/>read logs]
    G --> V3[Isolated env<br/>destroyed after run]
    G --> V4[Linter + tests<br/>enforce rules]
```

Tech-debt cleanup

A background Agent scans the codebase on a schedule — finds anything drifting from the conventions and auto-fixes it; finds stale docs and updates them.
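The scanning half of that job does not need to be clever. This sketch assumes a hypothetical convention (every public function has a docstring) and turns each violation into a fix-up task that a background Agent could pick up. The scheduling itself (cron, a CI schedule) and the actual fix are out of scope. The `find_drift` name and task shape are illustrative.

```python
import ast
from typing import Dict, List


def find_drift(sources: Dict[str, str]) -> List[dict]:
    """Scan {path: source} and return fix-up tasks for a background Agent.

    Example convention (hypothetical): every public function has a docstring.
    """
    tasks = []
    for path, source in sorted(sources.items()):
        for node in ast.walk(ast.parse(source, filename=path)):
            if (isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
                    and not node.name.startswith("_")
                    and ast.get_docstring(node) is None):
                tasks.append({
                    "path": path,
                    "line": node.lineno,
                    "task": f"add a docstring to public function '{node.name}'",
                })
    return tasks
```

Keeping detection deterministic and leaving only the fix to the Agent makes each task small, checkable, and easy to verify by re-running the scan.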

```mermaid
graph LR
    S[Background Agent<br/>scheduled scan] --> F1[Auto-fix<br/>convention drift]
    S --> F2[Auto-update<br/>stale docs]
```

The spirit of the whole design boils down to one line:

Humans steer, Agents execute.

The engineer’s job shifts from “writing the code yourself” to “building a stable, reliable system for the Agent.”

Anthropic Hit the Same Wall

It’s not just OpenAI. In their long-running agent research, Anthropic describes nearly the same problem: hand an Agent a big task and it rushes — when context fills up, it dumps a half-finished mess; the next Agent that picks it up has no idea what happened.

Their solution evolved:

```mermaid
graph LR
    V1[Initializer Agent<br/>init environment<br/>break down asks<br/>add progress docs] -->|evolved into| V2[Planner Agent<br/>expand vague asks into<br/>a complete, clear feature list]
    V2 --> X[Executor Agent<br/>tick items off<br/>and mark progress]
    classDef old fill:#374151,stroke:#9ca3af,color:#fff
    classDef new fill:#1e3a8a,stroke:#3b82f6,color:#fff
    classDef exec fill:#7c2d12,stroke:#f97316,color:#fff
    class V1 old
    class V2 new
    class X exec
```

The first version was the Initializer Agent: set up the environment, break the request down, write a progress file. It evolved into the Planner Agent, whose job isn’t to chop tasks at random but to expand a vague request into a complete, clear, checkbox-able feature list.

The executor Agent takes that list and works through it, marking progress as it goes. The Planner absorbs the uncertainty; the executor can focus on doing.
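A minimal sketch of that checklist, assuming a hypothetical JSON file in which each feature carries an `id` and a `done` flag. Progress lives on disk rather than in the context window. A fresh executor Agent, or the next session after the context fills up, can therefore pick up exactly where the last one stopped.

```python
import json
from pathlib import Path
from typing import Optional


def next_feature(progress_file: Path) -> Optional[dict]:
    """Return the first unfinished feature, or None when the list is complete."""
    features = json.loads(progress_file.read_text(encoding="utf-8"))
    return next((f for f in features if not f["done"]), None)


def mark_done(progress_file: Path, feature_id: str) -> None:
    """Tick one feature off and persist the whole list immediately."""
    features = json.loads(progress_file.read_text(encoding="utf-8"))
    for f in features:
        if f["id"] == feature_id:
            f["done"] = True
            break
    else:
        raise KeyError(feature_id)  # unknown id: fail loudly instead of silently skipping
    progress_file.write_text(json.dumps(features, indent=2), encoding="utf-8")
```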

Quality evaluation followed the same logic — human review was too slow, so an Agent self-evaluates, sends issues back for revision, and the loop closes.

The Feedback Loop Is Gradient Descent in Disguise

Step back from Harness Engineering and you’ll see the math is the same as classical ML:

| Traditional ML | Harness Feedback Loop |
|---|---|
| Adjust parameters | Adjust next-round input (prompt) |
| Many iterations, converge | Many iterations, improve output |

The only difference is what gets updated: once it was weight matrices, now it’s prompts and workflows. The structure of iterative convergence is the same.
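Written side by side, the analogy looks like this. The second line is only schematic: there is no gradient. Here $p_t$ stands for the prompt and workflow at round $t$, and "revise" stands for whatever the harness does with feedback (a model call, a rule change, a human edit).

```latex
\begin{aligned}
\text{Traditional ML:}\quad & \theta_{t+1} = \theta_t - \eta\,\nabla_\theta \mathcal{L}(\theta_t) \\
\text{Harness loop:}\quad & p_{t+1} = \operatorname{revise}\!\big(p_t,\ \operatorname{feedback}(\operatorname{model}(p_t))\big)
\end{aligned}
```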

And feedback quality matters:

- Ground truth > Numerical score > Verbal feedback (“good job” / “you idiot”)

The more specific and verifiable the feedback, the more effective it is. That is exactly why linters and tests outperform “tell the Agent it did well” by such a wide margin.
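The feedback hierarchy is easy to see in code. This sketch uses hypothetical names throughout. It runs a function against known input/output pairs (ground truth) and turns each failure into a specific, checkable message. It also computes the single number those same results collapse into.

```python
from typing import Callable, List, Tuple

Case = Tuple[tuple, object]  # (arguments, expected result)


def ground_truth_feedback(fn: Callable, cases: List[Case]) -> Tuple[float, List[str]]:
    """Return (score, messages): the score is what a grader might report,
    the messages are the concrete feedback an Agent can actually act on."""
    messages = []
    for args, expected in cases:
        try:
            actual = fn(*args)
        except Exception as exc:  # a crash is feedback too
            messages.append(f"{fn.__name__}{args} raised {type(exc).__name__}: {exc}")
            continue
        if actual != expected:
            messages.append(f"{fn.__name__}{args} returned {actual!r}, expected {expected!r}")
    score = 1 - len(messages) / len(cases) if cases else 1.0
    return score, messages
```

A score of 0.5 tells the Agent that something is wrong. A message like `returned '--a--b-', expected 'a-b'` tells it what to fix.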

Four Takeaways for Engineers

If you walk away with only four things, take these:

- Keep agents.md lean: it’s a map, not an encyclopedia. The Agent will follow it to the right chapter.

- Make tools AI-friendly: structured (JSON) output over paginated UIs; pair every editing tool with a linter.

- Don’t over-criticize the AI: concrete feedback works; “you idiot” really does make its next response dumber.

- Let the model deposit Skills: successful patterns can be written into skill.md for reuse, so you don’t reteach from zero every time. This is no longer the Harness controlling the Agent; it’s the Agent growing its own capabilities through the Harness.
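A minimal sketch of "depositing" a skill. It assumes a hypothetical `skills/<name>/skill.md` layout: a short header with a name and a one-line description, followed by the steps that worked. The harness can list the headers cheaply and load a skill's body only when it is relevant. Real products define their own skill formats, so treat this layout as illustrative.

```python
from pathlib import Path
from typing import Dict, List


def save_skill(root: Path, name: str, description: str, steps: List[str]) -> Path:
    """Write a reusable skill file capturing a pattern that worked."""
    path = root / "skills" / name / "skill.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    body = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, 1))
    path.write_text(f"---\nname: {name}\ndescription: {description}\n---\n\n{body}\n",
                    encoding="utf-8")
    return path


def skill_index(root: Path) -> Dict[str, str]:
    """Cheap listing for the prompt: {name: description}; step bodies stay out of it."""
    index = {}
    for path in sorted((root / "skills").glob("*/skill.md")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if line.startswith("description: "):
                index[path.parent.name] = line[len("description: "):]
                break
    return index
```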

And running through all four:

Humans steer, Agents execute.

Your value shifts from “writing the code yourself” to “steering the Agent.”

Is Harness Engineering Just a Buzzword?

Time to address the elephant in the room — plenty of people think Harness Engineering is just hype.

To answer that, look at how the term got popular.

“Harness” isn’t new. Software testing has had the Test Harness forever. The AI world has had open-source projects like lm-evaluation-harness for years. Anthropic itself wrote Effective Harnesses for Long-Running Agents well before this term took off. What’s new is the combination “Harness Engineering,” and the consensus origin point is a piece called My AI Adoption Journey, published earlier this year by Mitchell Hashimoto (co-founder of HashiCorp, the person behind Terraform and Vagrant). The relevant passage roughly reads:

I don’t know if there’s a commonly accepted term for it yet, so I’m calling it Harness Engineering for now. The core idea: whenever the Agent makes a mistake, you change the system so it never makes the same mistake again. If a better word exists, I’ll happily switch.

Humble. Almost casual.

A few days later, things exploded. OpenAI published their Harness Engineering piece and the industry caught fire. But the author of a post on Martin Fowler’s site (Harness Engineering: first thoughts) noticed something interesting: in the body of OpenAI’s article, the word “Harness” appears only once. The title says Harness Engineering, but the term barely shows up in the prose. It looks like the title was pasted on after the fact.

Then LangChain picked up the baton, formalizing the equation Agent = Model + Harness. Anthropic followed up with the Planner / Generator / Evaluator stack as a textbook case study. From one person’s tentative coinage to industry-wide consensus took less than two months.

The skeptics’ attacks usually go like this:

- Nothing new — linters, task decomposition, quality evaluation, feedback loops are all old. Harness Engineering is just a fresh label on existing tech.

- Doomed to obsolescence — as models themselves get stronger, the Harness designs that look essential today will be absorbed by the model, becoming unnecessary.

The Stronger the Model, the Less Harness It Needs

The second critique is worth taking seriously — because the trend is visible even in Anthropic’s own papers.

Anthropic gave two concrete examples:

Example 1: Context anxiety

Sonnet 4.5 had a quirk: as the context grew long, the model would “rush to finish,” wrapping up with the shortest output it could get away with. Anthropic introduced a Harness technique called “context reset” to handle this. But after the upgrade to Opus 4.5, the problem largely disappeared, and that piece of Harness could be retired.
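A minimal sketch of the idea behind a context reset. When the conversation approaches a token budget, the harness asks for a handoff note, drops the history, and starts a fresh context seeded with that note. The 80% threshold, the 4-characters-per-token estimate, and the `summarize` callback are assumptions. Anthropic's actual implementation may differ.

```python
from typing import Callable, List

Message = str


def estimate_tokens(messages: List[Message]) -> int:
    # Crude heuristic (assumption): about 4 characters per token.
    return sum(len(m) for m in messages) // 4


def maybe_reset(history: List[Message], summarize: Callable[[List[Message]], str],
                budget: int = 100_000, threshold: float = 0.8) -> List[Message]:
    """Return the history unchanged, or a fresh one seeded with a handoff note."""
    if estimate_tokens(history) < threshold * budget:
        return history
    handoff = summarize(history)  # e.g. "done: X; in progress: Y; next: Z"
    return [f"HANDOFF NOTE (previous context was reset):\n{handoff}"]
```

Resetting before the window is actually full gives the model room to write a careful handoff instead of rushing.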

Example 2: Forced step-by-step execution

Earlier we mentioned the Generator handles one feature at a time. That constraint wasn’t the model’s idea — Anthropic hardcoded it into the prompt. Otherwise the early Generator would rush ahead and leave a half-done mess.

When Opus 4.6 shipped, that constraint came off. Anthropic found Opus 4.6 had strong enough global planning that it could grab the whole feature list, decide its own order, and proceed steadily on its own. The Evaluator could go back to scoring final output, not feature-by-feature.

Both examples point to the same conclusion: with every model generation, one piece of Harness becomes unnecessary.

Anthropic’s official line is more measured: they say “Harness won’t disappear, it’ll just morph to unlock more complex tasks.” But push the thought experiment further. If models become powerful enough, maybe all they’ll need is the most basic environmental interface (read/write files, internet search), and they’ll handle the other 99% themselves. At that point, Harness Engineering stops being a discipline to study and degrades into plain infrastructure, the way nobody today specifically studies “how to write an if-else.”

So Why Bother Learning It Now

Back to the original question: is Harness Engineering hype?

My take — it’s not hype, but it’s also not the endgame.

Not hype, because it has produced real results: OpenAI’s roughly 1M lines in 5 months, and Anthropic’s clear gap between runs with the full Harness and a solo Agent. These are verifiable engineering outcomes, not marketing. Real progress in engineering is often less about inventing new techniques than about assembling scattered capabilities into a framework you can systematically design and continuously improve. That’s exactly what Harness Engineering offers.

But it’s probably not the endgame either. As models keep improving, today’s constraint-and-correction scaffolding will be absorbed into the model itself. The term may quietly fade.

So I’d rather think of it as a key transitional technology — perhaps not the final answer, but the most practical answer for right now. Because today, models still hallucinate, still drift, still go off-track in complex tasks. In that reality, whoever can build a steadier Harness will be the first to convert AI capability into actual productivity.

Closing Thought

Harness Engineering as a term only caught fire in the last few months, but the thing it describes has always been there — we used to call it “writing better prompts,” “stuffing more context,” “adding more try/catch.” Promoting it to its own Engineering discipline only happened because Agents got complex enough that the model’s own capability isn’t the bottleneck anymore. The bottleneck is the gear around the model.

Next time Claude Code or Cursor feels “not smart enough,” “keeps making mistakes,” or “goes off the rails halfway through” — don’t rush to blame the model.

Take a look at the harness you handed it. Maybe it’s time for an upgrade.

Further Reading

- Mitchell Hashimoto: My AI Adoption Journey — origin of the term “Harness Engineering”

- OpenAI: Harness engineering: leveraging Codex in an agent-first world

- Anthropic: Effective harnesses for long-running agents

- Anthropic: Harness design for long-running application development

- LangChain: The Anatomy of an Agent Harness

- Martin Fowler: Harness Engineering — first thoughts

Logan

Senior software engineer, passionate about coding and smart home 🏠