Not Just Another AI Agent: Hermes vs OpenClaw, A Complete Breakdown

After two months with OpenClaw and a deep dive into Hermes Agent's memory and learning system, the design philosophy gap between the two turned out to be much larger than expected. Writing this down for future reference.

Why I Looked Into This

I’ve been using OpenClaw for nearly two months — it’s become a regular part of handling daily tasks. When Hermes Agent (NousResearch) started making the rounds, billed as “an agent that gets smarter on its own,” I got curious about how it actually differed from OpenClaw. This isn’t a claim that one is better than the other — it’s an attempt to understand what each is actually suited for.

Core Differences

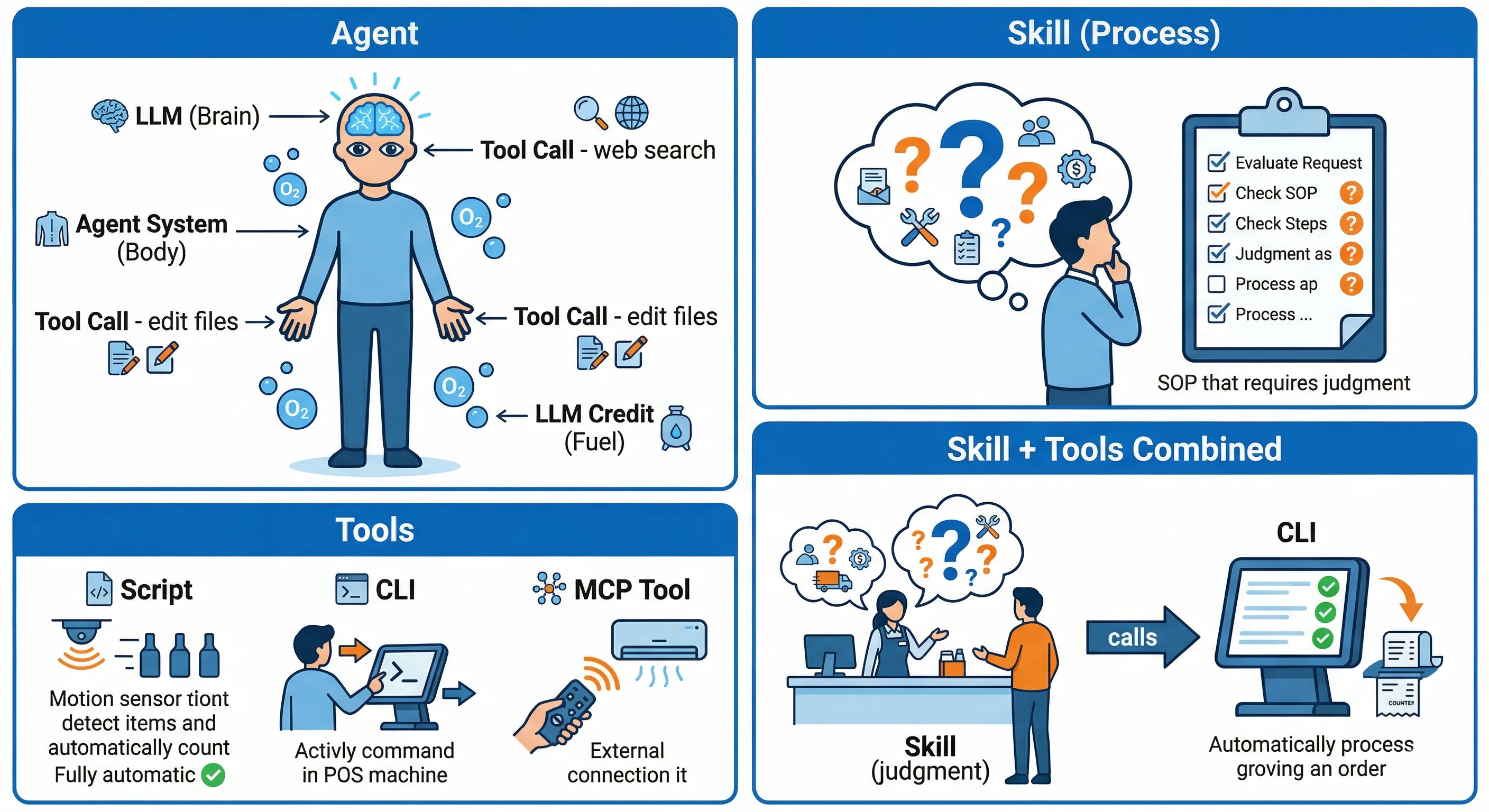

The design direction of these two frameworks diverges more than I expected.

Hermes Agent is a ready-to-run product that claims to work without any configuration. Its standout feature is a built-in self-improving loop: when an agent completes a complex task (5+ tool calls), it automatically creates or updates a skill. The next time a similar task comes up, it runs faster and more accurately.

OpenClaw starts from a different premise. It’s more of a toolbox for technical users — every component is designed to be composed freely. The Skills system, ACP protocol, and multi-node support all exist to let people extend and integrate on their own terms.

Feature Comparison

| | Hermes | OpenClaw |
|---|---|---|
| License | Apache 2.0 | MIT |
| Models | 200+ | 35+ |
| Memory | Built-in MEMORY.md (capped) + FTS5 session search; Honcho and others optional | MEMORY.md (M2M) + daily files + nightly consolidation |
| Learning | Creates and updates its own skills | Passive (skills are fed in by the user) |
| Deployment | Serverless (Daytona, Modal) or a $5 VPS | Requires an always-on machine |
| Developer | NousResearch | Peter Steinberger (joined OpenAI in Feb 2026) |

Hermes’ idle cost can be close to zero: serverless mode on Daytona or Modal lets it sleep when idle. OpenClaw requires a machine that stays on, and the electricity cost adds up over time.

The learning mechanism represents the biggest philosophical gap between the two, but ClawHub lets OpenClaw agents discover and install new skills on their own, which in practice closes the gap more than you’d think.

ClawHub is OpenClaw’s skill registry (think npm for Node.js). It runs as an independent service at clawhub.ai and is also available as a built-in OpenClaw skill. At runtime, agents can automatically search for, install, and publish skills packaged in SKILL.md format. Search uses vector semantic matching, and installation needs no manual steps.
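To make “vector semantic matching” concrete, here is a minimal Python sketch of ranking skills by cosine similarity. It is not ClawHub’s implementation: the skill names and the hand-picked 4-dimensional vectors are hypothetical stand-ins for what a real embedding model would produce from each SKILL.md description and from the user’s query.

```python
import math

# Hypothetical skill index: hand-picked toy vectors stand in for real
# embeddings of each skill's SKILL.md description.
SKILL_INDEX = {
    "deploy-static-site": [0.9, 0.1, 0.0, 0.2],
    "summarize-pdf": [0.1, 0.8, 0.3, 0.0],
    "rotate-api-keys": [0.2, 0.0, 0.1, 0.9],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search_skills(query_vec: list[float], index: dict, top_k: int = 2) -> list[str]:
    """Return the top_k skill names, most similar first."""
    ranked = sorted(index, key=lambda name: cosine(query_vec, index[name]), reverse=True)
    return ranked[:top_k]


# Hypothetical embedding of the query "publish my blog".
query_vec = [0.8, 0.2, 0.1, 0.1]
matches = search_skills(query_vec, SKILL_INDEX)
```

With these toy vectors, `deploy-static-site` ranks first even though it shares no words with “publish my blog”. Keyword search cannot do that.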

Hermes’ Memory and Learning System

Going through the documentation, I found Hermes’ learning mechanism more complex than the marketing copy suggests, and more honest about its limitations.

Two-Layer Memory System

Hermes’ built-in memory has two layers, both stored in ~/.hermes/memories/:

| File | Purpose | Character Limit |
|---|---|---|
| MEMORY.md | Agent’s personal notes: environment facts, project conventions, lessons learned | 2,200 |
| USER.md | User profile: preferences, communication style | 1,375 |

At the start of each session, both files are frozen into a snapshot and injected at the top of the system prompt. Changes to memory during a session are written to disk immediately, but they don’t take effect until the next session — because the snapshot is taken at session start and doesn’t update mid-session.
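Here is a minimal sketch of this freeze-at-start behavior. It assumes nothing about Hermes’ real code: the `MemoryStore` and `Session` classes are hypothetical, and a temporary directory stands in for ~/.hermes/memories/.

```python
import tempfile
from pathlib import Path

MEMORY_FILES = ("MEMORY.md", "USER.md")


class MemoryStore:
    """Hypothetical model of the two memory files on disk."""

    def __init__(self, root: Path):
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)
        for name in MEMORY_FILES:
            (self.root / name).touch()

    def read(self, name: str) -> str:
        return (self.root / name).read_text(encoding="utf-8")

    def append(self, name: str, note: str) -> None:
        # Persisted to disk immediately, as described for mid-session edits.
        with (self.root / name).open("a", encoding="utf-8") as f:
            f.write(note + "\n")


class Session:
    """Freezes the memory files once, at session start."""

    def __init__(self, store: MemoryStore):
        self.snapshot = "\n".join(
            f"## {name}\n{store.read(name)}" for name in MEMORY_FILES
        )

    def system_prompt(self, base: str) -> str:
        # The frozen snapshot sits at the top of the system prompt.
        return f"{self.snapshot}\n{base}"


# Demo: a note written during s1 is on disk at once but only reaches s2's prompt.
store = MemoryStore(Path(tempfile.mkdtemp()) / "memories")
s1 = Session(store)
store.append("MEMORY.md", "- This project uses pnpm, not npm")
s2 = Session(store)
```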

The Four Parts of the Self-Improving Loop

1. Periodic Nudge — Actively scans recent behavior at fixed intervals and asks “what’s worth persisting?” This is the core mechanism that sets Hermes apart from other agents. No user input required — the agent organizes its own memory proactively.

2. Skill Creation — Trigger conditions are specific: complex tasks (5+ tool calls), error recovery, direct user correction, non-trivial workflows (shortcuts discovered the hard way). Once triggered, reusable Markdown files are created in ~/.hermes/skills/.

3. Skill Self-Improvement — When a better approach is found during use, it updates via patch (only old string + new string), not a full replacement. This avoids breaking parts that were already working.

4. Session Search — All conversations are stored in SQLite (FTS5 full-text search), retrievable at any time and injected into context after LLM summarization. ⚠️ Worth flagging: FTS5 is keyword search, not semantic search. If the words in a question differ from the words used when something was recorded, related content might not surface, even when it is conceptually connected. Hermes currently avoids vector embeddings because of their cost and hardware overhead, and this blind spot is the trade-off.
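Here is a rough Python sketch of the trigger check and the patch-style update from items 2 and 3. Everything in it is hypothetical. `TaskTrace`, `should_create_skill`, and `patch_skill` are illustrative names, not Hermes APIs. The sketch reads the trigger list as “any one condition is enough”. Rejecting a patch whose old string matches more than once is my own safeguard, not documented Hermes behavior.

```python
from dataclasses import dataclass


@dataclass
class TaskTrace:
    """Hypothetical summary of a finished task; field names are illustrative."""
    tool_calls: int
    recovered_from_error: bool = False
    user_corrected: bool = False
    found_shortcut: bool = False


def should_create_skill(trace: TaskTrace, min_tool_calls: int = 5) -> bool:
    # Any one of the listed triggers is treated as sufficient.
    return (
        trace.tool_calls >= min_tool_calls
        or trace.recovered_from_error
        or trace.user_corrected
        or trace.found_shortcut
    )


def patch_skill(text: str, old: str, new: str) -> str:
    """Swap one exact snippet and leave the rest of the skill file untouched."""
    count = text.count(old)
    if count == 0:
        raise ValueError("old string not found; skill left unchanged")
    if count > 1:
        # Ambiguous match: refusing is safer than guessing which one to edit.
        raise ValueError(f"old string matches {count} times; include more context")
    return text.replace(old, new, 1)


skill = "# Deploy docs site\n1. Run `npm run build`\n2. Upload the dist/ folder\n"
patched = patch_skill(skill, "npm run build", "pnpm build")
```

Because only the matched snippet changes, step 2 of the hypothetical skill survives the update byte for byte. This is the “don’t break what already works” property described above.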
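You can reproduce the keyword-versus-semantic caveat with Python’s built-in `sqlite3` module. This assumes the SQLite library Python links against was compiled with FTS5, which is common but not guaranteed. The table name, columns, and sample sessions are hypothetical, not Hermes’ actual schema.

```python
import sqlite3

# In-memory stand-in for a session archive; schema is hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE VIRTUAL TABLE sessions USING fts5(session_id UNINDEXED, content)")
conn.executemany(
    "INSERT INTO sessions (session_id, content) VALUES (?, ?)",
    [
        ("s1", "Fixed the nginx reverse proxy so the dashboard loads over HTTPS"),
        ("s2", "Set up a cron job to back up the Postgres database every night"),
    ],
)


def search(query: str) -> list[str]:
    """Return matching session ids, best BM25 rank first."""
    rows = conn.execute(
        "SELECT session_id FROM sessions WHERE sessions MATCH ? ORDER BY rank",
        (query,),
    )
    return [row[0] for row in rows]


exact = search("nginx")            # shared keyword
paraphrase = search("web server")  # same idea, different words
compound = search("backup")        # stored text says "back up"
```

`exact` finds `s1`. `paraphrase` and `compound` come back empty, even though a human would connect both queries to the stored sessions.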

```mermaid
graph TD
A[Session End] --> B[Periodic Nudge<br/>Active scan at fixed intervals]
A --> E{"Complex task?<br/>5+ tool calls"}
B --> C{"Worth persisting?"}
C -->|No| Z[End]
C -->|Yes| D[Write to MEMORY.md<br/>or USER.md]
D --> Z
E -->|No| Z
E -->|Yes| F{"Trigger condition met?<br/>Error recovery / User correction / Shortcut"}
F -->|No| Z
F -->|Yes| G[Create .md Skill file<br/>skills/ directory]
G --> H[Use Skill]
H --> I{"Better approach found?"}
I -->|Yes| J[Patch update<br/>not full replacement]
J --> K[Next Session]
I -->|No| K
K --> L[FTS5 full-text search<br/>Session Archive]
L --> M[LLM Summarization]
M --> N[Inject into Context]
N --> Z
style A fill:#e1f5fe
style G fill:#f1f8e9
style K fill:#fff3e0
```

An Honest Assessment: How Well Does Hermes’ Learning System Actually Work?

The research also turned up a fair number of limitations and criticisms:

“What’s being called ‘self-learning’ is fundamentally just structured note-taking + CRUD. Skills are system prompt injection with a file operation layer on top — not real AI learning.”

— r/LocalLLaMA (original post)

Key limitations:

- Honcho is off by default: Honcho is a third-party open-source memory service developed by Plastic Labs. It uses LLM dialectical reasoning to continuously build a semantic user model across sessions. When enabled, the agent automatically retrieves memories before conversations and syncs summaries afterward. It’s off by default because it requires installing the honcho-ai package and configuring a Honcho Cloud API key. Many users found that memory wasn’t working and assumed something was broken. The real issue is that the documentation isn’t clear enough.

- No review gate: Skills are written automatically with no human oversight. Once a bad workaround gets recorded, it keeps getting reinforced, and there is no version control.

- Requires ramp-up to deliver value: The first 20 tasks show almost no benefit. The episodic memory needs to fill up before the effect becomes noticeable (r/AISEOInsider).

- Skill drift risk: Multiple agents repeatedly patching the same skill can introduce conflicting edits, with no conflict detection.

- Inconsistent skill quality: Some users report “I kept training it, but it kept making the same mistakes.” The community workaround is marking skills as “locked” or “user-authored” to prevent overwrites — not a perfect solution. Some people have written a dedicated Claude Code skill specifically to audit Hermes skill files.
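The community “locked” workaround amounts to a guard placed in front of the patch step. Here is a minimal Python sketch of that guard. It assumes a hypothetical frontmatter convention (`locked: true` or `user_authored: true`) that Hermes itself does not necessarily use. The exactly-once match rule is also an assumption.

```python
import re

# Hypothetical convention: a YAML-style frontmatter block at the top of the
# skill file, where `locked: true` or `user_authored: true` blocks agent edits.
FRONTMATTER = re.compile(r"\A---\n(.*?)\n---\n", re.DOTALL)
PROTECTED_KEYS = {"locked", "user_authored"}


def is_protected(skill_text: str) -> bool:
    match = FRONTMATTER.match(skill_text)
    if match is None:
        return False
    for line in match.group(1).splitlines():
        key, _, value = line.partition(":")
        if key.strip() in PROTECTED_KEYS and value.strip().lower() == "true":
            return True
    return False


def guarded_patch(skill_text: str, old: str, new: str) -> str:
    """Apply a single-snippet patch unless the skill is marked as protected."""
    if is_protected(skill_text):
        raise PermissionError("skill is locked; route the change to a human reviewer")
    if skill_text.count(old) != 1:
        raise ValueError("old string must match exactly once")
    return skill_text.replace(old, new, 1)


locked = "---\nname: deploy\nlocked: true\n---\n1. Run the build\n"
editable = "---\nname: deploy\n---\n1. Run the build\n"
```

This only stops overwrites. It does not detect conflicting edits between agents, and it does nothing to review a bad skill that was never locked.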

But there are real results too: One user reported a 40% speedup on repetitive research tasks after 3 skills were established (r/openclaw).

Bottom line: Hermes’ learning system genuinely works, but it’s been over-marketed. The substance is “the agent writes useful notes to itself and pulls them out on the next run” — not the kind of autonomously evolving AI you’d see in science fiction.

What the Community Is Saying

“OpenClaw can do anything Hermes can do, but you have to set it up yourself. Hermes comes like this out of the box. Hermes is more accessible to non-technical users.”

— r/openclaw (original post)

“Using OpenClaw feels like playing with a toy… the architecture is too bloated, it gets messy to work with. Hermes is much more stable — it doesn’t break every update, and the memory actually works.”

— r/hermesagent (original post)

“OpenClaw is strong on multi-channel automation and workflows; Hermes is better for long-term memory and learning. Rather than choosing one, use both.”

— r/AgentsOfAI (original post)

Three different voices, broadly the same picture: Hermes is easier to get started with but has less depth than OpenClaw, while OpenClaw requires setup but has a higher ceiling. Opinions still split on whether that ceiling is worth OpenClaw’s complexity.

Practical Use Cases

If cloud cost matters and you want something running quickly with the agent maintaining itself, Hermes is the better fit.

If full control and customization matter — multi-node support, strict security requirements — OpenClaw is more solid.

These aren’t mutually exclusive choices. Many people run Hermes for daily tasks and OpenClaw for work that needs deep integration.

For people already running OpenClaw, there’s almost no short-term incentive to migrate. Existing automations and configurations would have to be rebuilt, and Hermes’ learning system needs time to accumulate before it delivers value. Switching doesn’t make sense yet.

For people just trying to get started with a self-hosted agent, Hermes is actually the better starting point. Serverless deployment means you don’t need an always-on machine first. The cost of experimentation is low, and the out-of-the-box memory mechanism lets newcomers feel the value of an agent quickly.

State of the Ecosystem

GitHub numbers show a clear divergence in growth trajectories between the two frameworks:

| | Hermes | OpenClaw |
|---|---|---|
| Stars | 35,805 | 351,969 |
| Forks | 4,543 | 70,890 |
| Contributors | 214 | 30+ |
| Commits (last 30 days) | 2,463 (~82/day) | 100+ |
| First release | March 2026 (v0.2.0) | November 2025 (v0.1.1) |

OpenClaw’s community is roughly 10x larger than Hermes’ by star count, but most of that gap comes from time in the open — OpenClaw went public the day after the repo was created and has been accumulating community for over four months. Hermes created its repo in July 2025, then developed quietly for eight months before its first public release in March 2026. Reaching 35k stars in under a month is actually a fast growth rate. On development momentum, Hermes is far ahead — over 2,400 commits in the last 30 days, updates nearly every day, releasing roughly once a week.
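The ratios behind “roughly 10x” and “~82/day” can be checked from the GitHub table’s figures alone, with nothing else assumed:

```python
# Figures from the GitHub numbers table in this section.
stars = {"Hermes": 35_805, "OpenClaw": 351_969}
forks = {"Hermes": 4_543, "OpenClaw": 70_890}
hermes_commits_30d = 2_463

star_ratio = stars["OpenClaw"] / stars["Hermes"]
fork_ratio = forks["OpenClaw"] / forks["Hermes"]
commits_per_day = hermes_commits_30d / 30

print(f"stars: {star_ratio:.1f}x, forks: {fork_ratio:.1f}x, "
      f"Hermes commits/day: {commits_per_day:.0f}")
```

By stars the gap is just under 10x. By forks it is wider, at about 15.6x.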

Governance adds further context: OpenClaw founder Peter Steinberger announced in February 2026 that he was joining OpenAI, and committed to establishing a nonprofit foundation to take over open-source governance. Whether the foundation can maintain the original development pace is still an open question. On the Hermes side, the migration tool already supports importing from OpenClaw, a clear bid for existing OpenClaw users.

References

- Hermes Agent

- Hermes Agent Documentation

- OpenClaw GitHub

- OpenClaw Documentation

- Reddit: Hermes learning mechanism critique

- Reddit: I tried Hermes so you don’t have to

- Reddit: Hermes Self Evolving AI Agent

- Reddit: Hermes vs OpenClaw

- Reddit: Switched from OpenClaw to Hermes

- Reddit: OpenClaw vs Hermes Agent

Logan

Senior software engineer, passionate about coding and smart home 🏠